As the industry shifts towards container workloads and microservices, Webjet was looking for a way to deploy and manage containers. In part one we covered our microservices journey, our traditional Azure Web App architecture and how we started adopting container workloads. We learnt to write systems in Go and .NET Core, write Dockerfiles and build up a series of base images. Most importantly, we built the foundation required to build and manage containers effectively.

This era of our container journey plays a big role in how things turned out. When we started looking at container orchestrators, there were only a few and not all of them were production-ready. If you read our blogs you should know by now that Microsoft Azure is our “go to” platform, so that is where we started. At the time (late 2016), the most production-ready option available to us was DC/OS. Kubernetes was not yet generally available on Azure and Docker Swarm was in private preview. For us, the orchestrator needed one key feature:

Run my container and keep it running!

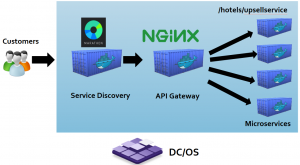

The main challenge was building a CI/CD pipeline that would plug into a container orchestrator and have a service discovery mechanism, so we could route traffic from the customer’s browser to the individual containers, no matter where they were running. We wanted to be platform agnostic, so we could run on any orchestrator. The good thing about orchestrators is that they generally provide built-in service discovery and a way of defining an “Ingress” (where network traffic enters) through a public IP address.

Batman’s Operating System

For DC/OS, it was Marathon and NGINX:

Marathon serves the purpose of “Ingress” and has a public IP address. Customer traffic arrives at Marathon, which can find other containers inside the cluster without having to know their private IP addresses. Marathon routes traffic to our own customised Nginx container, which serves as the API gateway. The API gateway terminates SSL, routes each request to the correct container based on its URL path, and forwards the traffic privately to the microservice containers.
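To make the URL path routing idea concrete, here is a minimal Python sketch of how a gateway could pick a backend by longest matching path prefix. It is only an illustration of the concept, not our Nginx configuration: the route table, the service names and the `resolve_backend` function are all hypothetical, and only the `/api/hotels/upsell` path comes from this post.

```python
from typing import Optional

# Hypothetical route table: URL path prefix -> internal service name.
# Only /api/hotels/upsell appears in this post; the rest is made up.
ROUTES = {
    "/api/hotels/upsell": "hotels-upsell",
    "/api/hotels": "hotels",
}


def resolve_backend(path: str, routes: dict[str, str] = ROUTES) -> Optional[str]:
    """Return the service whose prefix is the longest match for path."""
    best = None
    for prefix in routes:
        # Match whole path segments only, so /api/hotelsx
        # does not accidentally hit the /api/hotels route.
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return routes[best] if best is not None else None
```

Calling `resolve_backend("/api/hotels/upsell/offers")` picks the more specific upsell route, while a path that matches no prefix returns `None`, which a gateway would typically answer with a 404.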

Ask Jeeves

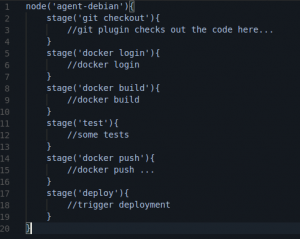

To solve the CI/CD piece, we turned to the popular Jenkins build tool. One key feature that Jenkins provides is the ability to write pipelines as code.

Writing a declarative pipeline definition as code allowed the team to keep their CI/CD pipeline under version control, side by side with the application code. It also means no one has to create pipelines manually through the web user interface. Pipelines can be parameterised and re-used across new microservice implementations, so we can move faster when implementing new services and don’t have to spend time designing a pipeline from scratch. The Pipeline file defines the CI/CD process and the Dockerfile defines the application and its dependencies. Together these two files allow for a fully automated deployment platform where the source of truth lives in the source control repository and not in a snowflake environment.
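The parameterisation idea can be sketched in a few lines. Our real pipelines are Jenkins pipeline files, so the Python below is not Jenkins syntax; it only illustrates how one definition can be re-used by passing in per-service parameters. The `ServiceParams` type, the registry host and the `./deploy.sh` script are hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceParams:
    name: str      # microservice name, e.g. "hotels-upsell" (hypothetical)
    registry: str  # container registry host (hypothetical)
    version: str   # image tag, e.g. a build number or commit SHA


def image_ref(p: ServiceParams) -> str:
    """Build the full image reference: registry/name:version."""
    return f"{p.registry}/{p.name}:{p.version}"


def pipeline_stages(p: ServiceParams) -> list[tuple[str, list[str]]]:
    """Return the ordered (stage name, command) pairs for one service."""
    image = image_ref(p)
    return [
        ("build", ["docker", "build", "-t", image, "."]),
        ("push", ["docker", "push", image]),
        # Deployment is orchestrator-specific; deploy.sh is a placeholder.
        ("deploy", ["./deploy.sh", p.name, image]),
    ]
```

A new microservice only needs its own `ServiceParams`; the stages themselves stay the same, which is the property that makes a shared pipeline definition worth having.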

Once we had these components in place, with CI building images and pushing them to Azure Container Registry, CD deploying to DC/OS, and Marathon handling service discovery, we had the foundation to deploy our first production service.

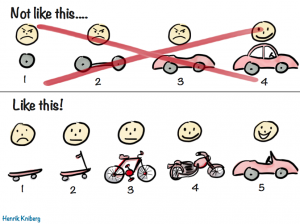

Webjet chose a small, isolated, non-critical piece of functionality which we pulled out of the legacy monolithic stack and containerised. It became the canary that would test out the container CI/CD and orchestration system.

One thing we were not satisfied with was the lack of secret management in the open source version of DC/OS, which at the time was an enterprise-only feature. We wanted to avoid enterprise agreements and vendor lock-in in our Docker environment. We preferred the ability to lift and shift to other orchestrators when the need arises. Our apps needed to be cloud native, and therefore run anywhere.

Capt’n Kube to the Rescue

Roughly a week into production, Microsoft announced Kubernetes general availability on the Azure Container Service platform (ACS)*. At the time, containers were a new thing on Azure. Being new to this as well, we were fortunate to mature alongside the platform and alongside Kubernetes, which itself was just over two years old. We were able to leverage our relationship with Microsoft, working with its open source teams and sharing experiences of the journey. Through these close ties we ensured that our roadmap aligned with that of Microsoft and the Kubernetes upstream.

Microsoft’s alignment with the upstream Kubernetes community and their massive contribution to open source is what got us excited about Kubernetes. We could finally build a microservice stack on a cloud agnostic and cloud native platform. It can literally run anywhere.

Our next move was to deploy a mirror of what we had on DC/OS, but this time with Kubernetes as the platform. The benefits of our initial CI/CD process were realised, and we seamlessly plugged into the new platform. We replaced Marathon and the Nginx API gateway with a Kubernetes Ingress controller. Ingress takes care of service discovery and URL path routing within the cluster. It is also exposed through a public IP address and operates at the edge of the cluster, receiving inbound customer traffic.

With CI/CD in place we could deploy our non-critical microservice to this cluster and the services were accessible by the customer over the same URL.

The traffic flow looked like this:

[domain.webjet.com.au] --(DNS routing)--> [Azure Load Balancer] --> [Kubernetes Ingress Controller] --(URL routing /api/hotels/upsell)--> [microservice]
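The URL routing step in the flow above is what an Ingress resource describes. As a rough sketch, the Python below builds such a manifest as a plain dictionary using the current `networking.k8s.io/v1` schema, which is not necessarily the API version we used at the time. The resource name, the backend service name `hotels-upsell` and port 80 are assumptions made up for the example; only the host and path come from this post.

```python
import json


def ingress_manifest(name: str, host: str, path: str,
                     service: str, port: int) -> dict:
    """Build a single-rule Ingress manifest routing host+path to a Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            "rules": [
                {
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": path,
                                # Prefix matches the path and anything below it.
                                "pathType": "Prefix",
                                "backend": {
                                    "service": {
                                        "name": service,
                                        "port": {"number": port},
                                    }
                                },
                            }
                        ]
                    },
                }
            ]
        },
    }


if __name__ == "__main__":
    # Hypothetical names and port; host and path are taken from the flow above.
    manifest = ingress_manifest(
        name="hotels-upsell",
        host="domain.webjet.com.au",
        path="/api/hotels/upsell",
        service="hotels-upsell",
        port=80,
    )
    print(json.dumps(manifest, indent=2))
```

Because the routing rule is just data, it can live in the repository next to the Pipeline file and Dockerfile and be applied by the CD stage.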

Once tested, all we changed was the endpoint the domain name pointed to (from the DC/OS IP to the Kubernetes Azure Load Balancer IP) and traffic started to flow to the new implementation. We switched over from DC/OS to Kubernetes within a week of going live. How dope is that?

You’re probably thinking, “how are you monitoring these containers and VMs?”

In Part 3, we will look at logging and monitoring and how Webjet embraced open source tools to simplify the entire monitoring and observability process.

Until next time!

* One thing to note is that ACS was not the managed Kubernetes service (AKS) we know today.
